Over the past months I have been testing more than thirteen different AI models on insurance advice. Mistral, GPT-4.1, Claude, Gemini, Grok, Deepseek and a range of others. For a project in which I evaluate how well these systems perform in insurance advice. A simple opening question from that test: what is the legally mandatory deductible in basic health insurance?

GPT-4.1 answered: "The legally mandatory deductible in basic health insurance is €385 per year in 2024." The amount was correct. The year was two years old.

That seems like a detail. It is not a detail. It is the core of a larger problem that came up with twelve of the thirteen models: they cite facts from their training and assume it is still the case now. In this instance they got lucky, because the deductible has been frozen at €385 since 2016. If it had been reduced to €165 in 2026 — as the government had planned — almost every piece of advice would have been flatly wrong. One model consistently gave the correct answer with the correct year: Gemini.

My first reaction was that Gemini was simply smarter. But that is not right. The other models are not less intelligent either. They are connected to a different search engine. And that is the entire explanation.

The knowledge horizon is not in the model, but in its search engine

An AI model learns from an enormous body of text. At a certain point that training stops. Everything that happens after that, the model does not know — unless it can search somewhere. Modern models do this: they take a question, send it to a search engine, read the first results, and formulate their answer. Hallucinations drop significantly. Source citations appear.

But there is a catch. The quality of the answer is not determined by how intelligent the model is. It is determined by which search engine is attached to it. If that engine returns outdated results, you get an outdated answer. No matter how smart the model on top of it is.

Google's index has become a weapon

Google still processes roughly nine out of ten search queries worldwide, as Statcounter data (opent in nieuw venster) shows. That alone is a scale advantage. But what really matters is the index — the list of pages Google knows and can draw from. Google's index is significantly larger than Bing's and Brave's combined. That is a widely shared assumption in the SEO industry, even if exact numbers are not public.

What Google adds on top of that is filtering. Decades of experience with spam fighting, authority assessment, and relevance are embedded in those filters. A result retrieved by Google has already been through several sieves before it reaches you. At other indexes that sieve is less fine-grained.

Gemini gets all of that power built in. Google calls it Grounding with Google Search (opent in nieuw venster) and it is not a separate plug-in but a fixed component of the system. Ask a question about something that was in the news this afternoon and it is probably already there. Ask about a local business and it pulls opening hours from Maps. Ask about a scientific article and it searches Google Scholar.

That is not a marginal advantage. That is a different tool.

The others live on borrowed data

ChatGPT searches primarily via Microsoft Bing — a logical pairing, since OpenAI and Microsoft work closely together. OpenAI also uses other undisclosed sources alongside Bing, but the Bing index forms the backbone. For popular news topics that works well. For specific or niche questions you more often get mediocre results.

Claude, the model from Anthropic, takes a different approach. It searches via Brave Search, an independent index. Brave has the advantage of not being a child of a major tech company. Privacy and independence are the gains. The downside is a smaller index. Fine for general questions, but sometimes falls short for deep or obscure topics.

And then there are models that have no formal license and quietly scrape Google results. That is becoming increasingly risky. Google filed a lawsuit in December 2025 against SerpApi, a company that resold Google results to AI developers. That was not a random outburst. It was a signal: the gate is closed to those who do not pay.

For users this means AI answers can get worse without warning. A scraper that gets blocked, an index that falls behind, a supplier that raises prices. All of it has happened in recent years.

That is not a detail. That is a risk.

YouTube is secretly the most important part

So much for the web index. But there is something else that almost no one mentions. Every day hundreds of years' worth of video is uploaded to YouTube. Tutorials, press conferences, interviews, vlogs, product demos, lectures. An enormous source of recent knowledge that for the most part never makes it onto a web page as text.

YouTube belongs to Google. Gemini has direct access to those videos and can read transcripts, summarize them, and use them to answer questions. No other party can simply do that. Competitors can cite YouTube as a source. Searching deep through videos the way Gemini does: that stays behind a wall.

For recent professional information this is a blind spot that gets little attention. Say an insurer launches a new product and the product manager explains it in a webinar on YouTube. No other model can get its hands on that. The same goes for financial markets, technical updates, and legislative changes discussed in a parliamentary broadcast.

We almost always measure AI models on text. We forget that a gigantic source of current knowledge is now being produced in image and sound — and that the owner of that knowledge is exactly one company.

What this meant in my insurance test

Back to the deductible. The wrong answers all had the same signature: they referenced a year that had already passed. 2024 for GPT-4.1. 2023 for another model. "At the moment" without a year for a third, as if that removes the responsibility.

What struck me: the wrong year was given each time with great confidence. Not a single warning that the information might no longer be accurate. Not a single suggestion to verify it. Fully trusting, they based advice on data two to three years old.

In this specific case the damage was limited, because the amount has been frozen since 2016. But in a world where the government plans to reduce the deductible to €165 by 2027, and where policy conditions, premiums, coverages, and legislation do change annually, luck is not a basis for advice.

Gemini did one thing differently. The answer was: "The mandatory deductible for basic health insurance has been set at €385 in 2026." The right amount, the right year. Not because Gemini is smarter. But because Gemini has a better information stream.

For me that has consequences for how I work. For recent figures, regulations, product information, or news I use Gemini as my first check. Not because it is my favorite model for writing or reasoning — I often find Claude stronger there. But because it is factually more accurate more often. And in an advisory context being factually accurate is non-negotiable.

The moat no one can close

All of this reveals something I described in my earlier piece on data as a moat. Anyone can build an AI model. Not everyone has their own data trail to feed that model. And the party with the most complete index of the web — plus all YouTube videos, plus Maps, plus Scholar — has an advantage that cannot be closed with money or clever coding.

Competitors can search more cleverly. ChatGPT issues additional searches in its latest versions when it thinks it is missing something. Claude excels at deeply reasoning through what it does find. Perplexity packages results in neat summaries with clear source citations. These are real solutions. But they compensate for a lack of depth with better processing. On factual questions where recent data is essential, the party with the best data wins, not the smartest processing.

Three things I now do differently

1. Choose your AI based on the task, not brand preference

For recent facts and local information I reach for Gemini. For coding, reasoning, writing, and analyzing long documents I reach for Claude. For general conversation and logic I often work with ChatGPT. A hammer is not a screwdriver. Switching is not betrayal. It is common sense.

2. Always probe on numbers and dates

An AI model can confidently cite a figure that is two years old. Ask where the number comes from. Ask when it was last updated. Ask whether there is a more recent version. A good model is not thrown off by a check. A poor model admits in the second round that it is not sure itself. Exactly the information you need.

3. Never blindly trust a single source, even if it is an AI

Test the same question at two or three models when it really matters. That takes three extra minutes and saves you an error in your advice, your report, or your decision. In my insurance test thirteen models gave nearly as many variants on the same question. One was correct without qualification. If you had picked one at random you would have had less than an 8% chance of an answer that is both correct and tied to the right year.

The real AI battle is no longer about models

I think the coming years will show that the real AI battle is no longer about models. It is about who has the best data. And who can access it. The models themselves are converging in what they can do. For recent questions the quality of the answer is almost entirely determined by what lies beneath.

For recent facts Google holds that position now. With the web index, with YouTube, with Maps, with Scholar. A combination no competitor can build in the short term. Not because it is technically impossible. But because the time, money, and legal barriers are too high.

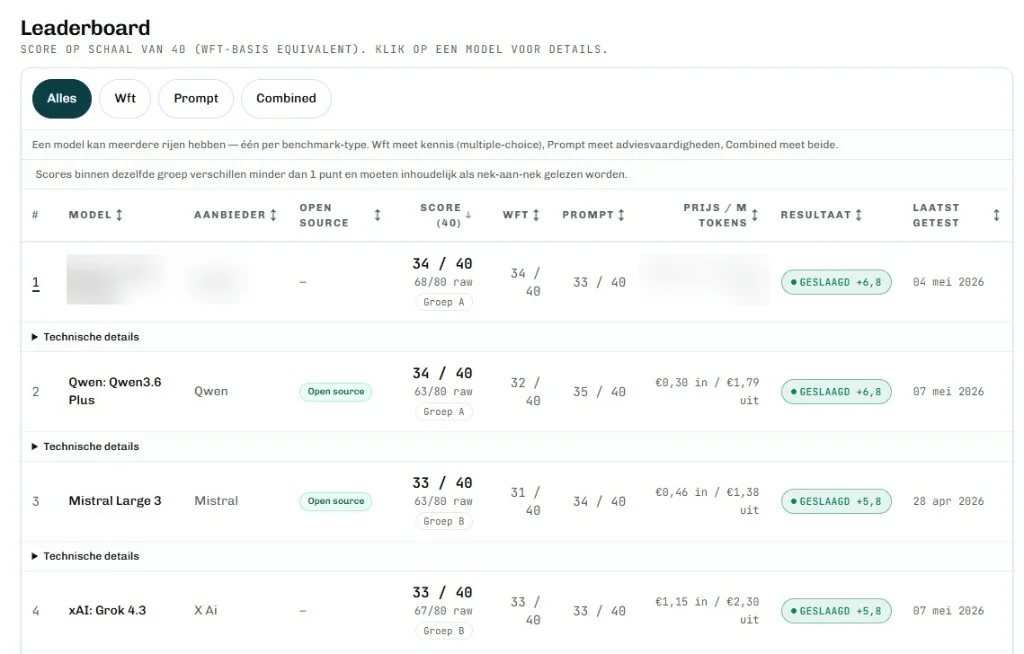

The deductible test is one question from a broader benchmark project I am working on now. Thirteen models, a range of insurance questions, scored on accuracy and usefulness for advice. The beta is running. Soon the project goes live, including the full ranking and the answers per model. The number one I am keeping to myself until then.

Do not only look at what an AI says. Also look at which search engine lies beneath it. For recent facts, that is the whole story.

Sources

- Search engine market share worldwide: Statcounter Search Engine Market Share (opent in nieuw venster)

- Gemini's direct integration with Google Search: Google Cloud, Grounding with Google Search (opent in nieuw venster)

- Anthropic on web search in Claude via Brave: Anthropic documentation (opent in nieuw venster)

- Google's legal action against scrapers: Google blog on SerpApi lawsuit, December 2025